/dev/zero

The infinite stream

-

Making FileVault Use a Disk Password -- 10.14 Edition

I previously wrote about how to make Mac OS’s FileVault disk encryption feature use separate passwords for unlocking the disk and logging into the system once it is running. This allows for better separation of concerns, but it goes against the proverbial grain, as the front end for FileVault tries its best to keep the passwords for unlocking the disk in sync with passwords for user accounts in an effort to keep people from locking themselves out of their machines.

This design remains true today, though the tech is different: rather than using HFS+ volumes and Core Storage volume groups, Mac OS now uses APFS volumes and APFS containers to do the same job. Surprisingly, the main roadblock that caused me to write this article is not the new storage back end, but rather several changes to Mac OS 10.14 that make it far more aggressive about the way it handles FileVault passwords:

- When initial setup runs from a FileVault-encrypted disk, it refuses to create the first user unless it can also add that user to FileVault.

- fdesetup, the tool for managing users on FileVault volumes, refuses to delete the last user from a volume even if that volume has a disk password.

- The boot-time program that unlocks the disk refuses to allow one to enter a disk password at all if the volume has any users.

- You cannot add a disk password to a volume with users.

Previously, one could simply install the system as usual and delete the user created during initial setup, but these changes now make that impossible. I worked around this by skipping initial setup entirely.

Create an APFS container and volume

APFS is similar to ZFS and btrfs in that formatting a partition with it creates a container and carves that up into thin volumes that occupy only the space that their contents need. An APFS volume can optionally reserve a minimum amount of space or specify a maximum that it is allowed to take, but by default it has neither. Creating an APFS container and an encrypted volume to install Mac OS onto is simpler than it was with Core Storage, taking only two commands while booted into an installer disk:

    # diskutil apfs createcontainer disk0s2
    Creating container with disk0s2
    Started APFS operation on disk0s2 Untitled
    Creating a new empty APFS Container
    Unmounting Volumes
    Switching disk0s2 to APFS
    Creating APFS Container
    Created new APFS Container disk1
    Disk from APFS operation: disk1
    Finished APFS operation on disk0s2 Untitled
    # diskutil apfs addvolume disk1 apfs 'Macintosh HD' -passprompt
    Passphrase:
    Repeat passphrase:
    Exporting new encrypted APFS Volume "Macintosh HD" from APFS Container Reference disk1
    Started APFS operation on disk1
    Preparing to add APFS Volume to APFS Container disk1
    Creating APFS Volume
    Created new APFS Volume disk1s1
    Mounting APFS Volume
    Setting volume permissions
    Disk from APFS operation: disk1s1
    Finished APFS operation on disk1

Though the underlying technology is different, this setup is logically much the same as what we had before.

    # diskutil apfs list
    APFS Container (1 found)
    |
    +-- Container disk1 1AA9AEE4-521E-4AA6-9BA9-08FD20DBF6AE
        ====================================================
        APFS Container Reference:     disk1
        Size (Capacity Ceiling):      380295426048 B (380.3 GB)
        Capacity In Use By Volumes:   149790720 B (149.8 MB) (0.0% used)
        Capacity Not Allocated:       380145635328 B (380.1 GB) (100.0% free)
        |
        +-< Physical Storage disk0s2 B0646769-963F-4322-89A5-4D2E63510C70
        |   -------------------------------------------------------------
        |   APFS Physical Store Disk:   disk0s2
        |   Size:                       380295426048 B (380.3 GB)
        |
        +-> Volume disk1s1 6296A941-52A6-42DF-93C6-363944FA5DB0
            ---------------------------------------------------
            APFS Volume Disk (Role):   disk1s1 (No specific role)
            Name:                      Macintosh HD (Case-insensitive)
            Mount Point:               /Volumes/Macintosh HD
            Capacity Consumed:         20480 B (20.5 KB)
            FileVault:                 Yes (Unlocked)

Now that the machine has an encrypted volume to install to, return to the installer and install Mac OS onto it. Once it is installed it will prompt for the disk password and then boot into initial setup.

Create the first user by hand

We can’t use the machine without creating a user, but if we proceed through the setup process to create one, it will prompt for the disk password and add the new user to FileVault, and we will be stuck with that user since we can’t remove it. Stuck between a rock and a hard place, we have little choice but to bypass the setup process entirely and replicate what it does by hand. Turn the machine off by quitting the installer with Command+Q, then reboot into single-user mode by holding Command+S while turning it back on.

Once booted into single-user mode (note that since we didn’t create a user the disk password still works), we get to add the initial user by hand. This is a more arduous process than it is on most other unix-ish systems because Mac OS stores user accounts in a directory service that it runs locally, much as if a Linux machine were to run a small OpenLDAP instance instead of using passwd(5) and group(5) files. This service does not run in single-user mode, so we need to remount the disk for writing and start it.

    # mount -uw /
    # launchctl load /System/Library/LaunchDaemons/com.apple.opendirectoryd.plist

Then add the bits that comprise a user account, one at a time. This will show some errors that appear to be there because the file name from the previous command is new in version 10.14 and the dscl command was never updated to address the change.

    # dscl . -create /Users/gholms
    # dscl . -create /Users/gholms UserShell /bin/zsh
    # dscl . -create /Users/gholms RealName "gholms"
    # dscl . -create /Users/gholms UniqueID 501
    # dscl . -create /Users/gholms PrimaryGroupID 20
    # dscl . -create /Users/gholms NFSHomeDirectory /Local/Users/gholms

UniqueID, the user’s ID number, can be any number greater than 500. The setup program normally uses 501 for the first user, so we do, too. The PrimaryGroupID of 20 corresponds to the staff group that all local users belong to.

The /Local portion of the home directory looks a bit strange, but it is mandatory and the new user will not be able to log in if it isn’t there.

Next, set a password for the new user and add that user to the admin group.

    # dscl . -passwd /Users/gholms
    # dscl . -append /Groups/admin GroupMembership gholms

Admin users on Mac OS are typically members of far more than just the admin group. Skipping the other groups may break some things, but I don’t log in using the admin account on my machine and it has yet to cause me any issues. Just be warned that it is not quite a stock configuration.

After this, create the new user’s home directory.

    # install -d -o 501 -g 20 /Users/gholms

Finally, tell the system that initial setup has already run and then reboot.

    # touch /private/var/db/.AppleSetupDone
    # reboot

After reaching this point I unlocked the disk using the disk password, logged in as the new admin user, then created a non-admin user using System Preferences as usual.
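The account-creation steps above are easy to mistype in single-user mode, so it can help to review them as a list first. The following is a minimal sketch of a dry run that only prints the commands for a given user name and ID; it executes nothing. The function name print_user_commands and its two arguments are hypothetical, chosen for illustration, and the sketch assumes the group record lives at /Groups/admin.

```shell
#!/bin/sh
# Dry run: print the commands from the walkthrough for review.
# Nothing here touches the directory service or the file system.
# print_user_commands is an illustrative name, not an Apple tool.
print_user_commands() {
    user=$1   # short account name, e.g. gholms
    uid=$2    # numeric user ID, e.g. 501
    cat <<EOF
dscl . -create /Users/$user
dscl . -create /Users/$user UserShell /bin/zsh
dscl . -create /Users/$user RealName "$user"
dscl . -create /Users/$user UniqueID $uid
dscl . -create /Users/$user PrimaryGroupID 20
dscl . -create /Users/$user NFSHomeDirectory /Local/Users/$user
dscl . -passwd /Users/$user
dscl . -append /Groups/admin GroupMembership $user
install -d -o $uid -g 20 /Users/$user
touch /private/var/db/.AppleSetupDone
EOF
}

commands=$(print_user_commands gholms 501)
printf '%s\n' "$commands"
```

Printing rather than executing keeps the sketch safe to run on any machine; the output can then be typed or pasted once the directory service is running.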

Conclusion

Ultimately, it is still possible to continue using a disk password to boot Mac OS, but the tooling in 10.14 is far more hostile to it. To make matters worse, it does not appear to be possible to add recovery keys to such disks either:

    # fdesetup changerecovery -personal
    Enter the user name:

Apple’s intentions are certainly good; after all, they are simply trying to keep people from locking themselves out of their data by doing things that they don’t understand. Apple’s mistake in this release was to stop treating disk passwords and user passwords as equals and instead to consider disk passwords a degenerate case. That said, Apple could still make this scenario less painful without compromising this goal by making a few small tweaks:

- Allow one to add a recovery key to a volume with no users. A recovery key can only make it harder to lock oneself out because it provides an additional means of unlocking the disk.

- Allow disk passwords to unlock the disk even when user passwords are present. Again, as an additional means of unlocking the disk this can only make it more difficult to lock oneself out.

- Allow one to delete the last user on a volume when a disk password is present. Disk passwords are not normally present on the boot volume, so when one is there it is there intentionally and one should be able to use it.

- Do not insist on adding the initial user to a volume during system setup when a disk password is present. Again, when a disk password is present it is there for a reason and should be usable.

It is my hope that future releases of Mac OS will make separate FileVault disk passwords easier to manage than they are today, or at the very least, not make them more difficult. Disk passwords and user passwords both serve legitimate use cases, and it is possible to support them equally without compromising the user experience for the majority who use only user passwords or making it easier for them to shoot themselves in the feet. Either way, at the moment this process seems to work. Documenting it is sure to help someone else out there who has the same needs as me.

-

How to Get Credentials on Eucalyptus 4.2

If you run euca_conf --get-credentials on eucalyptus 4.2 you will see the following warning:

    warning: euca_conf is deprecated; use ``eval `clcadmin-assume-system-credentials`'' or ``euare-useraddkey''

There are numerous reasons for that command’s deprecation, but what causes confusion is the fact that it has two replacements. Setting up a new cloud now involves more than just one set of credentials, and if you’re used to having fully-functional credentials immediately this is likely to trip you up.

Why Change?

One of the most common complaints about euca_conf is that it tries to be everything to everybody. It combines multiple types of functionality that need to run in different places, adding excess dependencies and requiring one to log into systems that one normally shouldn’t have to. Eucalyptus 4.2 introduces new administration tools that break euca_conf’s functionality down into three groups with more specific purposes:

- Whole-cloud administration tools

- Cloud controller (CLC) support scripts

- Cluster controller (CC) support scripts

Cloud controller and cluster controller support scripts can run only on those specific systems, and thus are only installed alongside them. The rest of the administration tools are web service clients, similar to euca2ools, that can run from anywhere. All they need are access keys.

But where do those access keys come from?

Out with the Old

In the old regime, access keys and other credentials come in the form of a zip file containing a bunch of certificates as well as eucarc, a shell script that sets a bunch of environment variables that include service URLs and the access keys themselves. The first zip file it creates is missing several service URLs because those services have yet to be set up, and it doesn’t use DNS either because that has yet to be set up as well.

Once DNS and all of the services are ready, we then have the cloud generate a new zip file. Everything seems fine until something changes for whatever reason and we need to obtain a third one. Since we can only have two certificates at a time, though, this third zip file will not include one. This causes countless problems for automation that relies on them, including eucalyptus’s own QA scripts.

That said, the zip file still has some particularly useful properties:

- It’s a single file for the administrator to e-mail to new users

- It contains both access keys and service URLs

- It (usually) contains all of the certificates needed to bundle images

A euca2ools.ini file also has the first two of those properties, while also managing to be more flexible. Any euca2ools commands that can create access keys, such as euare-useraddkey and euare-usercreate, can generate euca2ools.ini files automatically. That leaves just certificates, which we dealt with by making them all optional or possible to obtain automatically.

In with the New

In isolation, euca2ools commands alone have a chicken-and-egg problem: they require access keys to run, but a new cloud doesn’t have any access keys. We break this loop by splitting eucalyptus installation into two phases, each with different credentials.

Setup Credentials

A cloud controller support script, clcadmin-assume-system-credentials, provides temporary setup credentials. This script works similarly to euare-assumerole, but it is much more limited and it only works on a cloud controller. Setup credentials cannot be used for normal system operation; they provide access only to service registration, service configuration, and IAM services: the minimum necessary to get up and running with euca2ools.

    # eval `clcadmin-assume-system-credentials`
    # euserv-register-service -t user-api -h 198.51.100.2 ufs-1
    # euctl system.dns.dnsdomain=mycloud.example.com

Admin Credentials

Once DNS and an IAM service are set up, you can use euca2ools to create long-lived admin credentials that let you access the cloud’s full functionality. It is these credentials that are the replacements for the zip file. Once you create them, you are unlikely to ever need setup credentials again.

    # euare-usercreate -wld mycloud.example.com gholms > ~gholms/.euca/mycloud.ini

Here is an explanation of the various parts of that command:

- gholms: Create a user named gholms

- -w: Write out a euca2ools.ini file

- -l: In that file, make that user the default for this cloud

- -d mycloud.example.com: Use the domain mycloud.example.com as the cloud’s DNS domain

Normally, when this command writes a configuration file it will pull the DNS domain from the IAM service’s URL, but since this is the very first user we have to supply it by hand because it has not yet been set.

If you are interested in using eucalyptus’s administration roles instead of full-blown admin credentials, you would create a new account here and add it to whatever roles you need.

What now?

Once you have a set of admin credentials you can use them for day-to-day cloud administration the same way you would with a classic eucarc file.

    $ export AWS_DEFAULT_REGION=mycloud.example.com
    $ euare-accountcreate -wl alice > alice.ini
    $ mail -s "Try out this shiny, new cloud" -a alice.ini ...

You can change the name of the region in the configuration file if you want. The domain name is simply the default.

-

Useful Git Commands: a Shortcut for GitHub Pull Requests

Normally, when someone asks me to merge something in git I need to add his or her repository using git remote add, fetch the branch I need, and then merge it. When someone submits a pull request to a project hosted on GitHub, however, GitHub additionally publishes it as something I can fetch from my own repository:

    $ git ls-remote origin | grep pull/6
    03d7fb7af91a74bb7658a0742fa68bfeb5d50a3f refs/pull/6/head
    f8ebbc62555143019947f1255b064cda38fd239f refs/pull/6/merge
    8a42021dbabcfc222b4d47c5ada47e344154d934 refs/pull/60/head
    f4d8052000601e59e4e7d4dec4aa4094df4e39a0 refs/pull/61/head
    8a519986f5b59721692ec75608edf0f404f88e87 refs/pull/62/head
    9c03e723e8b50ca56a1257659d686a68a69e6e40 refs/pull/62/merge
    f3a983c6fc3ff236c2bc678cfec3885da609f79a refs/pull/63/head
    e7953c21a77fb37fb7158dd87fc0c156dd8f97ae refs/pull/63/merge

With this I can use one line of configuration to create a convenient shortcut that lets me immediately check out any pull request:

    $ git config --add remote.origin.fetch "+refs/pull/*/head:refs/remotes/origin/pull/*"
    $ git checkout pull/63
    Branch pull/63 set up to track remote branch pull/63 from upstream.
    Switched to a new branch 'pull/63'
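To see what the extra fetch refspec does without touching a real project, the following sketch builds a throwaway local repository that stands in for GitHub. The directory layout, the branch name main, and the pull request number are illustrative assumptions; the only part taken from the text above is the refspec itself.

```shell
#!/bin/sh
set -eu
work=$(mktemp -d)

# A bare repository plays the role of the GitHub-hosted project.
git init -q --bare "$work/origin.git"

# Create one commit and publish it both as a branch and under
# refs/pull/63/head, mimicking how a pull request is exposed.
git init -q "$work/seed"
cd "$work/seed"
git -c user.name=demo -c user.email=demo@example.com \
    commit -q --allow-empty -m "proposed change"
expected=$(git rev-parse HEAD)
git push -q "$work/origin.git" HEAD:refs/heads/main HEAD:refs/pull/63/head

# A second repository adds the refspec and fetches.
git init -q "$work/clone"
cd "$work/clone"
git remote add origin "$work/origin.git"
git config --add remote.origin.fetch "+refs/pull/*/head:refs/remotes/origin/pull/*"
git fetch -q origin

# The pull request head is now an ordinary remote-tracking ref.
fetched=$(git rev-parse refs/remotes/origin/pull/63)
echo "origin/pull/63 -> $fetched"
```

Because the refspec maps each refs/pull/N/head to refs/remotes/origin/pull/N, pull requests show up next to the regular remote-tracking branches and are refreshed by every fetch.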

-

Useful Git Commands: URL Rewriting

People have to use SSH or HTTPS to push to GitHub, but when fetching one can use git’s own network protocol because it is generally faster. You can make a specific repository on your machine use SSH only for pushing by cloning it with the faster git:// URL and running something like this:

    $ git config remote.upstream.pushurl git@github.com:gholms/boto

That works nicely, but you have to do it once for every single repository you want to interact with. That quickly becomes annoying. Thankfully, you can leverage git’s URL-rewriting mechanism to make this easier:

    $ git config --global url.git://github.com/.insteadOf github:
    $ git config --global url.git@github.com:.pushInsteadOf github:

This adds two new rules to your git configuration:

- If a URL starts with github: then replace that with git://github.com/.

- If a URL starts with github: and you are pushing then replace that with git@github.com:.

After you do that you can simply use URLs like github:gholms/boto when cloning. They will get rewritten to git@github.com:gholms/boto when pushing, and git://github.com/gholms/boto the rest of the time, speeding things up without creating additional work in the future.

This should work if you prefer HTTPS for pushing to GitHub, or if you use other servers, too. Just tweak the commands.
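One way to check the rewrite rules before relying on them is to ask git which URLs it would actually use. The sketch below assumes a git new enough to have git remote get-url, and it sets the rules in a scratch repository's local configuration rather than with --global, so it does not alter your own settings. Nothing is fetched or pushed.

```shell
#!/bin/sh
set -eu
repo=$(mktemp -d)
git init -q "$repo"
cd "$repo"

# Same two rules as above, scoped to this scratch repository only.
git config url.git://github.com/.insteadOf github:
git config url.git@github.com:.pushInsteadOf github:

# Add a remote using the shorthand URL.
git remote add origin github:gholms/boto

# get-url expands insteadOf and pushInsteadOf rules.
fetch_url=$(git remote get-url origin)
push_url=$(git remote get-url --push origin)
echo "fetch: $fetch_url"
echo "push:  $push_url"
```

If the rules behave as described, the fetch line should show git://github.com/gholms/boto and the push line git@github.com:gholms/boto.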

-

Useful Git Commands: Summarizing Lots of Log Entries

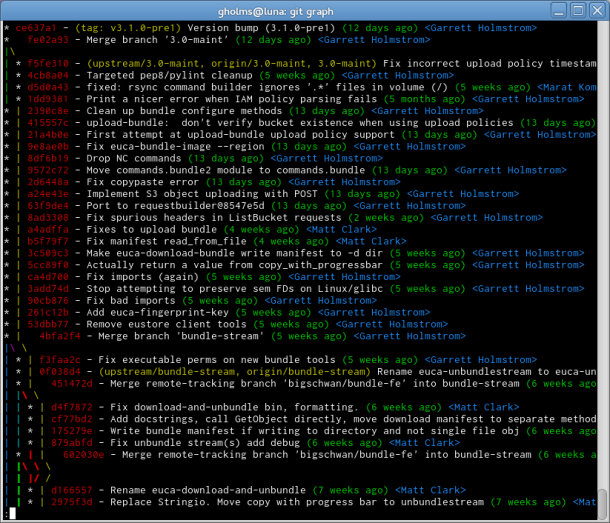

When looking for a summary of a git repository’s history, the output of

git logisn’t always as informative as one might like. It displays every commit in chronological order, which effectively hides the changes that merges bring in. It is also quite verbose, showing complete log messages, author info, commit hashes, and so on, drowning us with so much info that only a few commits will fit on the screen at once. After supplying the command with the right cocktail of options, though, its output becomes a significantly better summary:

The output above came from a command that is long enough that I made an alias, for it,

git graph, in my~/.gitconfigfile:[alias] graph = log --graph --abbrev-commit --date=relative --pretty=format:'%Cred%h%Creset -%C(yellow)%d%Creset %s %Cgreen(%cr) %C(blue)<%an>%Creset'Don’t forget that

git logaccepts a list of things to show logs for as well, so if you want to look at the logs forbranch-1andbranch-2you can simply usegit graph branch-1 branch-2to make them both show up in the graph.
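To try the alias on something small, the following sketch creates a scratch repository with two diverging branches and runs git graph on both. The commit messages and the demo identity are made up for illustration, and the alias is stored in the scratch repository's own configuration rather than in ~/.gitconfig.

```shell
#!/bin/sh
set -eu
repo=$(mktemp -d)
git init -q "$repo"
cd "$repo"
git config user.name "Demo User"
git config user.email demo@example.com

# Name the initial branch explicitly so the sketch does not depend on
# the local default branch name.
git symbolic-ref HEAD refs/heads/branch-1
git commit -q --allow-empty -m "common ancestor"

# Let the two branches diverge by one commit each.
git branch branch-2
git commit -q --allow-empty -m "work on branch-1"
git checkout -q branch-2
git commit -q --allow-empty -m "work on branch-2"

# The same alias as above, defined for this repository only.
git config alias.graph "log --graph --abbrev-commit --date=relative --pretty=format:'%Cred%h%Creset -%C(yellow)%d%Creset %s %Cgreen(%cr) %C(blue)<%an>%Creset'"

graph_output=$(git graph branch-1 branch-2)
printf '%s\n' "$graph_output"
```

Naming both branches on the command line is what brings the commit that exists only on branch-1 into the graph while branch-2 is checked out.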